Today DBOS announced a partnership with Databricks and an integration with Databricks Lakebase to make AI agent behavior more reliable, reproducible, and observable.

Building production-ready AI agents is hard because agents can fail in unexpected ways. For example, an agent that queries Unity Catalog tables and serves results to analysts might generate a malformed SQL query, invoke the wrong tool when trying to access a table, or produce a misleading summary of the data it retrieved. Any of these failures could lead to bad decisions downstream.

While any program might sometimes do something unexpected, the problem is especially hard for AI agents because they’re fundamentally nondeterministic. The steps an agent takes are determined by prompting an LLM, and there’s no easy way to know in advance how an LLM will respond to a prompt, context, or input.

This nondeterminism makes it hard to reproduce or fix bad behavior, especially in complex or long-running agents. If an agent does something wrong after an hour of iteration, the accumulation of nondeterminism makes the misbehavior nearly impossible to reproduce if you’re starting from the beginning.

When viewed through this lens, misbehaving agents are primarily an observability and reproducibility problem. If the correctness of an agent can only be determined empirically, then the only way to make agents reliable is to be able to reproduce their failures reliably. Then, we can analyze root causes, fix them, and build new test cases and evals.

To solve this problem, DBOS and Databricks are teaming up to help you build agents that are reliable and reproducible by default. The integration layers DBOS, a lightweight database-backed durable execution library, directly underneath your agents, adding fault tolerance and observability that run entirely on your existing Databricks infrastructure.

What is DBOS?

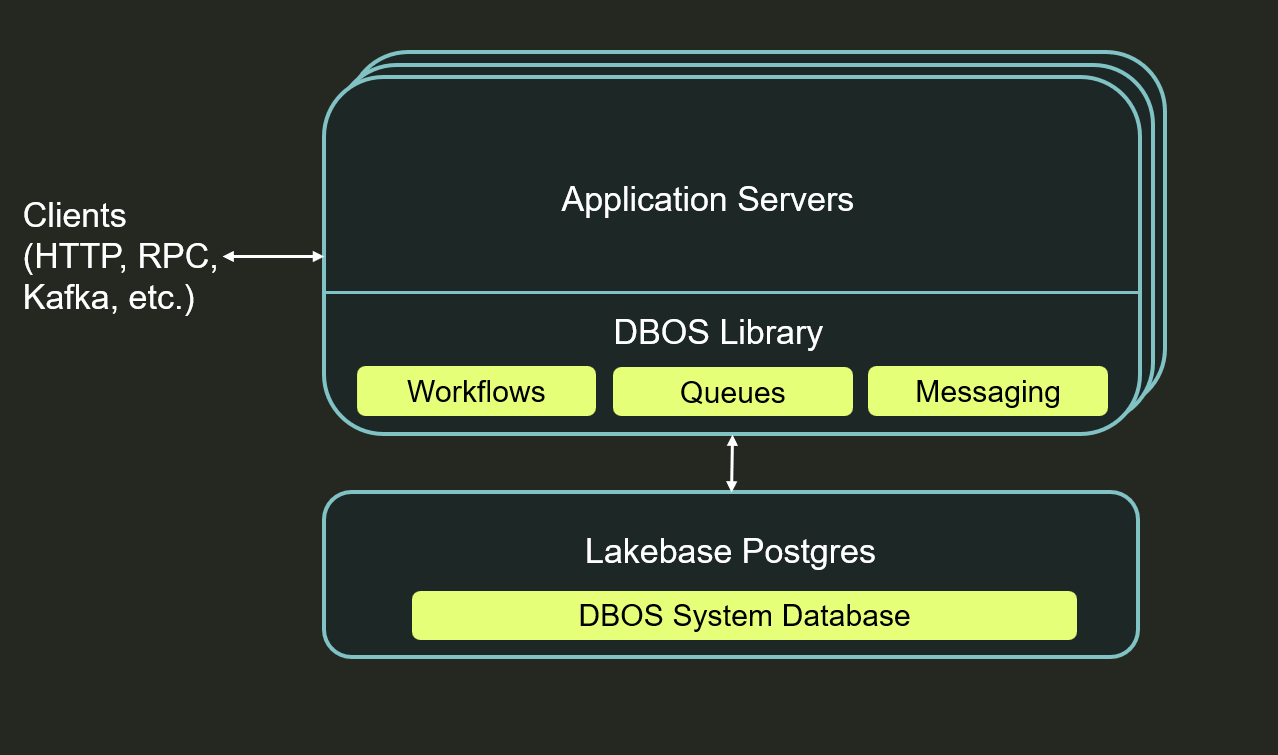

DBOS is a durable execution library that works on top of Databricks Lakebase (or any other Postgres database). It lets you write long-lived, failure-resilient code that survives crashes, restarts, deploys, and transient errors without losing state or accidentally duplicating work.

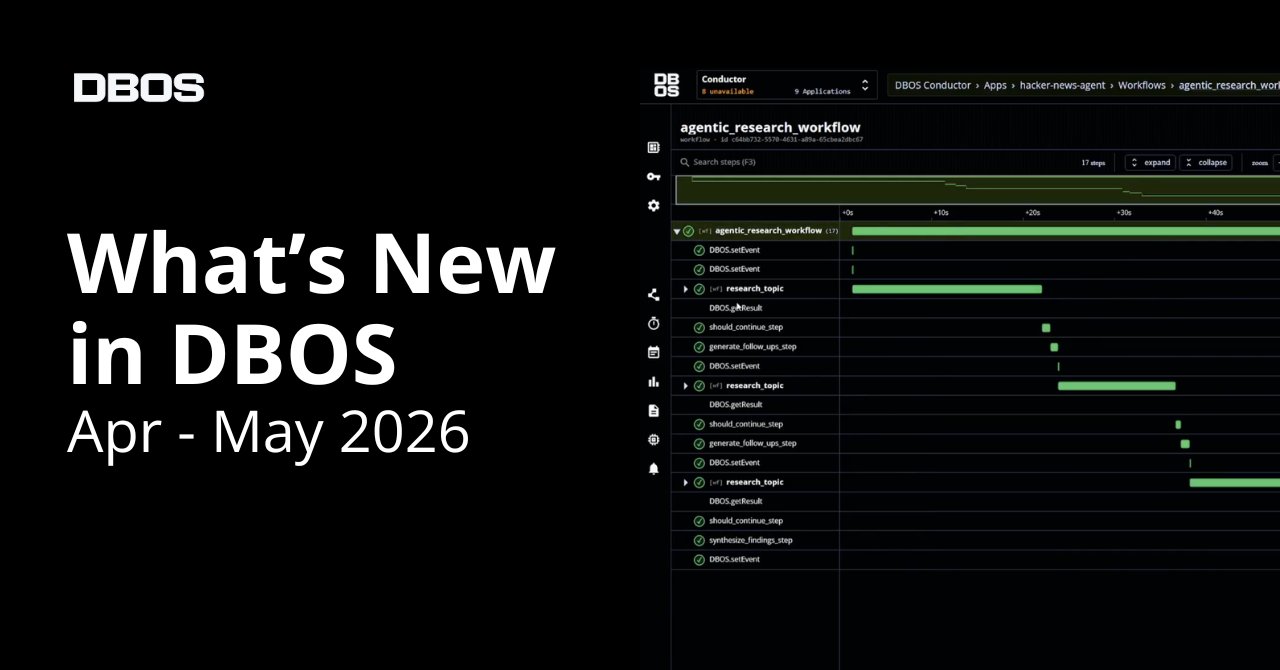

To use DBOS, just install the open source library, annotate workflows and steps in your code (or use integrations with popular agentic frameworks), and run your app as usual. As your agentic workflows execute, DBOS automatically checkpoints their progress into the database. If something goes wrong, your agents can recover and resume execution from the last completed step, not from the beginning.

Architecturally, DBOS is deliberately simple. All durability logic lives inside the library linked into your application. Your database acts as both the source of truth for workflow execution state and the recovery mechanism when failures happen.
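To make the checkpoint-and-resume idea concrete, here is a minimal hand-rolled sketch. It is not the DBOS library or its API: the `run_step` helper, the `checkpoints` table, and the toy agent steps are all hypothetical, and an in-memory SQLite database stands in for Postgres so the example stays self-contained.

```python
import json
import sqlite3

# Assumption: in-memory SQLite stands in for the Postgres database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE checkpoints ("
    " workflow_id TEXT, step_id INTEGER, output TEXT,"
    " PRIMARY KEY (workflow_id, step_id))"
)

calls = {"fetch": 0, "summarize": 0}  # counts real executions of each step


def run_step(workflow_id, step_id, fn, *args):
    """Return the checkpointed output if the step completed; otherwise run and record it."""
    row = conn.execute(
        "SELECT output FROM checkpoints WHERE workflow_id = ? AND step_id = ?",
        (workflow_id, step_id),
    ).fetchone()
    if row is not None:
        return json.loads(row[0])  # replay from the checkpoint, do not re-execute
    result = fn(*args)
    conn.execute(
        "INSERT INTO checkpoints VALUES (?, ?, ?)",
        (workflow_id, step_id, json.dumps(result)),
    )
    conn.commit()
    return result


def fetch_rows():
    calls["fetch"] += 1
    return [1, 2, 3]


def summarize(rows, fail):
    calls["summarize"] += 1
    if fail:
        raise ConnectionError("simulated transient failure")
    return f"{len(rows)} rows, total {sum(rows)}"


def agent_workflow(workflow_id, fail=False):
    rows = run_step(workflow_id, 0, fetch_rows)
    return run_step(workflow_id, 1, summarize, rows, fail)


try:
    agent_workflow("wf-1", fail=True)  # first attempt fails during step 1
except ConnectionError:
    pass

summary = agent_workflow("wf-1")  # recovery: step 0 is served from its checkpoint
```

On the second call, `fetch_rows` is not executed again; only the step that never completed runs. The real library handles this bookkeeping for you once workflows and steps are annotated.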

How Workflows Make Agents Reliable

Adding durable workflows to your Databricks-hosted agents naturally adds fault tolerance and observability. By checkpointing every LLM and tool call, they create a record of an agent’s progress up to a failure or instance of bad behavior. If a failure is transient (for example, a network glitch or process restart), your agent can use those checkpoints to recover from the last completed step. Otherwise, you can query that record to visualize the agent’s activity and see exactly what the failure was and what state (prompt, context, input) preceded it.
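As a rough illustration of querying that record, the sketch below logs each step's input alongside its output or error, then asks which step failed and what preceded it. The `step_log` table, the `record_step` helper, and the fake LLM and tool functions are hypothetical stand-ins, not DBOS's actual schema or API, and SQLite again substitutes for Postgres.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")  # assumption: stand-in for Postgres
conn.execute(
    "CREATE TABLE step_log ("
    " workflow_id TEXT, step_id INTEGER, name TEXT,"
    " input TEXT, output TEXT, error TEXT)"
)


def _log(workflow_id, step_id, name, step_input, output, error):
    conn.execute(
        "INSERT INTO step_log VALUES (?, ?, ?, ?, ?, ?)",
        (workflow_id, step_id, name, json.dumps(step_input), output, error),
    )
    conn.commit()


def record_step(workflow_id, step_id, name, fn, step_input):
    """Run one step and log its input together with either its output or its error."""
    try:
        output = fn(step_input)
    except Exception as exc:
        _log(workflow_id, step_id, name, step_input, None, f"{type(exc).__name__}: {exc}")
        raise
    _log(workflow_id, step_id, name, step_input, json.dumps(output), None)
    return output


def write_sql(question):
    # Stand-in for an LLM call that happens to produce malformed SQL.
    return "SELEC region, SUM(amount) FROM sales"


def run_sql(query):
    # Stand-in for a tool call; rejects anything that is not a SELECT.
    if not query.upper().startswith("SELECT "):
        raise ValueError(f"malformed SQL: {query}")
    return [["emea", 10]]


def agent(workflow_id, question):
    query = record_step(workflow_id, 0, "write_sql", write_sql, question)
    return record_step(workflow_id, 1, "run_sql", run_sql, query)


try:
    agent("wf-42", "What were sales by region?")
except ValueError:
    pass

# Which step failed, and with what input?
failed = conn.execute(
    "SELECT step_id, name, input, error FROM step_log"
    " WHERE workflow_id = ? AND error IS NOT NULL",
    ("wf-42",),
).fetchone()

# What did the agent do before that step?
history = conn.execute(
    "SELECT name, input, output FROM step_log"
    " WHERE workflow_id = ? AND step_id < ? ORDER BY step_id",
    ("wf-42", failed[0]),
).fetchall()
```

Here `failed` points at the `run_sql` tool call, and `history` shows that the malformed query came from the preceding `write_sql` step, which is where a fix would need to go.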

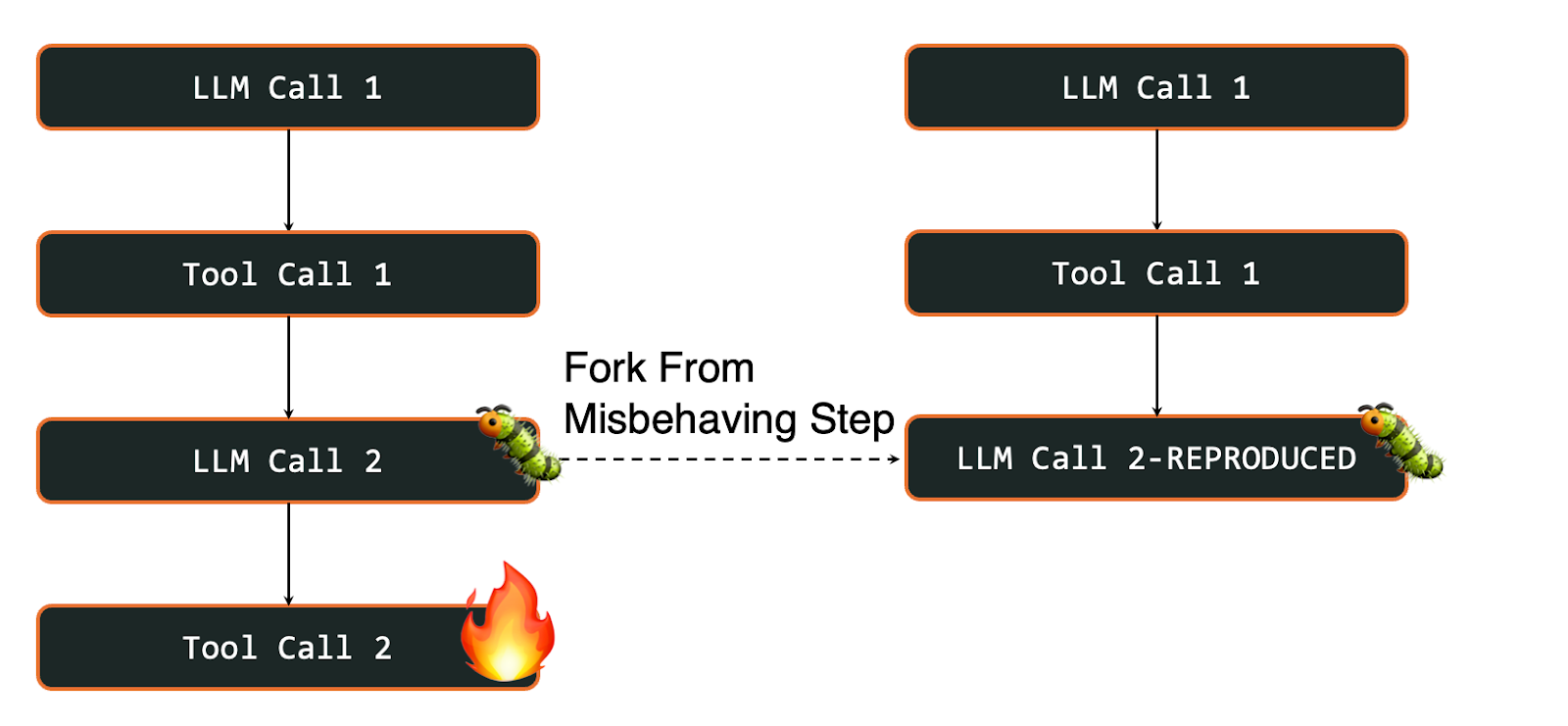

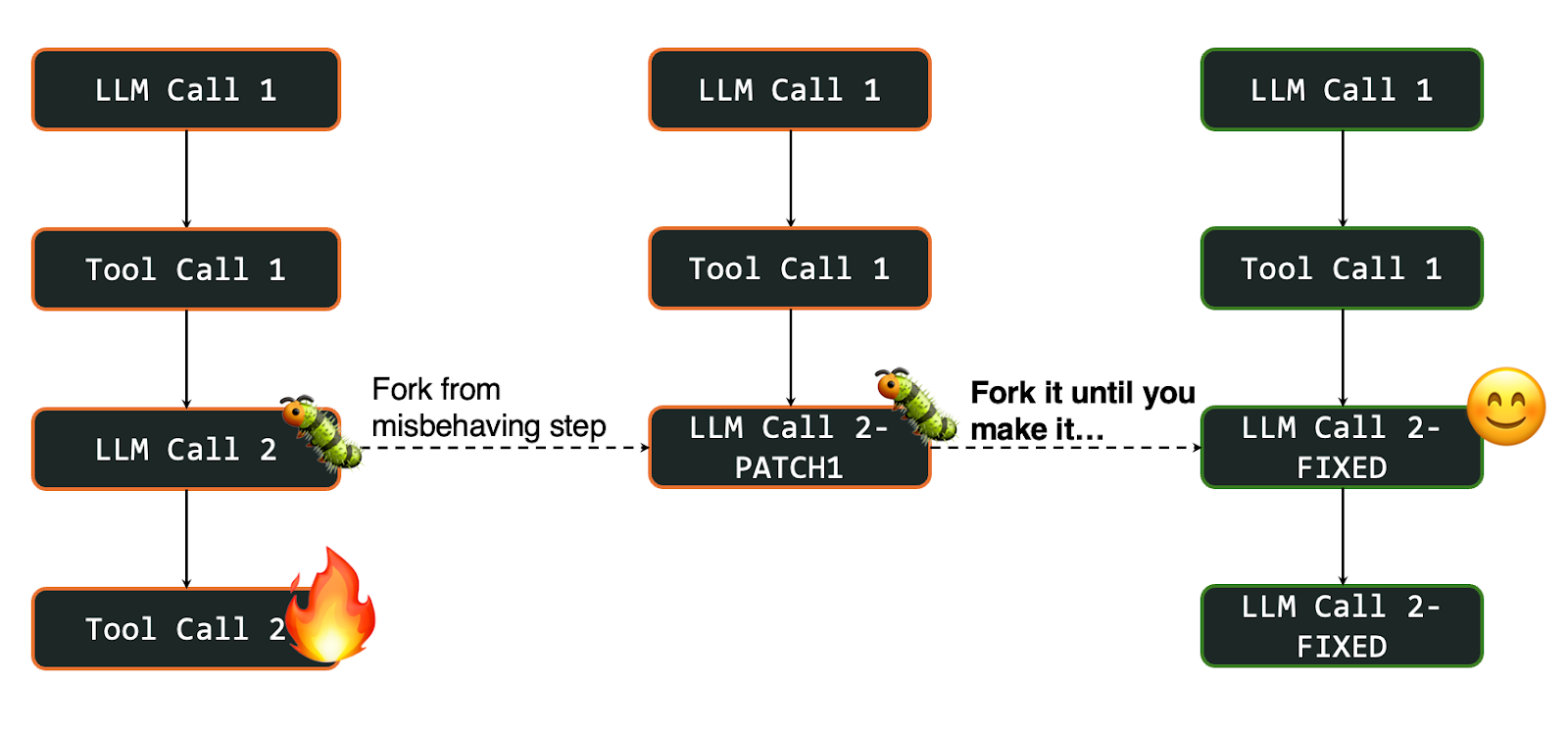

Durable workflows also solve a harder problem than fault tolerance: helping you reproduce and fix deeper issues in your agent’s prompts and tools. In particular, you can fork a workflow to create a copy of it with all its checkpoints up to a specific step, then restart execution from that point. Think of this like a "git branch" for workflow execution, where you create a separate branch that diverges from a specific moment in your agent's run.

One benefit of this reproducibility is that you can rapidly iterate on and test fixes. Once you’ve identified the source of an issue, you can apply a fix to the misbehaving step, fork and restart the workflow from that step, check if the issue was fixed, and repeat until successful. Being able to isolate and iterate on the problematic steps is especially useful for complex agents where testing from scratch would take a long time and burn too many tokens.

For example, imagine a Databricks-hosted agent that queries a Unity Catalog table, transforms the results, then generates a summary for the end user. If the agent produces a misleading summary at step three, you can fork the workflow at that step, preserving the exact query results and transformed data from the earlier steps, and rerun just the summary generation with an updated prompt, without re-executing the costly data retrieval and transformation that preceded it.
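The three-step scenario above can be sketched as follows. This is a toy model of forking, not DBOS's implementation: `fork_workflow`, `run_step`, the table layout, and the step functions are all hypothetical, and SQLite stands in for Postgres.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")  # assumption: stand-in for Postgres
conn.execute(
    "CREATE TABLE checkpoints ("
    " workflow_id TEXT, step_id INTEGER, output TEXT,"
    " PRIMARY KEY (workflow_id, step_id))"
)
executed = []  # names of steps that actually ran (as opposed to being replayed)


def run_step(workflow_id, step_id, fn, *args):
    """Replay a checkpointed step if present; otherwise execute it and checkpoint the output."""
    row = conn.execute(
        "SELECT output FROM checkpoints WHERE workflow_id = ? AND step_id = ?",
        (workflow_id, step_id),
    ).fetchone()
    if row is not None:
        return json.loads(row[0])
    result = fn(*args)
    executed.append(fn.__name__)
    conn.execute(
        "INSERT INTO checkpoints VALUES (?, ?, ?)",
        (workflow_id, step_id, json.dumps(result)),
    )
    conn.commit()
    return result


def fork_workflow(source_id, new_id, start_step):
    """Copy every checkpoint before start_step into a new workflow ID."""
    conn.execute(
        "INSERT INTO checkpoints (workflow_id, step_id, output)"
        " SELECT ?, step_id, output FROM checkpoints"
        " WHERE workflow_id = ? AND step_id < ?",
        (new_id, source_id, start_step),
    )
    conn.commit()


def query_table():
    return [{"region": "emea", "amount": 10}, {"region": "apac", "amount": 30}]


def transform(rows):
    return {r["region"]: r["amount"] for r in rows}


def summarize_v1(totals):
    return "Sales are flat."  # the misleading summary


def summarize_v2(totals):
    top = max(totals, key=totals.get)
    return f"{top} leads with {totals[top]}."  # the fixed prompt or logic


def agent(workflow_id, summarize):
    rows = run_step(workflow_id, 1, query_table)
    totals = run_step(workflow_id, 2, transform, rows)
    return run_step(workflow_id, 3, summarize, totals)


original = agent("wf-1", summarize_v1)

executed.clear()
fork_workflow("wf-1", "wf-1-fork", start_step=3)
fixed = agent("wf-1-fork", summarize_v2)  # only step 3 executes in the fork
```

The fork reuses the exact query results and transformed data from the original run, so only the summary step is re-executed, and the original workflow's record stays untouched for comparison.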

Essentially, you use workflows to impose determinism on fundamentally nondeterministic agents. Checkpointing every step an agent takes in a database makes it possible to reconstruct the agent’s state at any point in time, which makes it much easier (and costs far fewer tokens) to figure out why the agent is doing something weird and fix it.

Running Durable Agents on Databricks

Because DBOS is a lightweight library built on Postgres, you can easily add it to your existing Databricks-hosted agents. For example, this demo on GitHub shows you how to add DBOS to a Databricks-hosted agent built with the OpenAI Agents SDK.

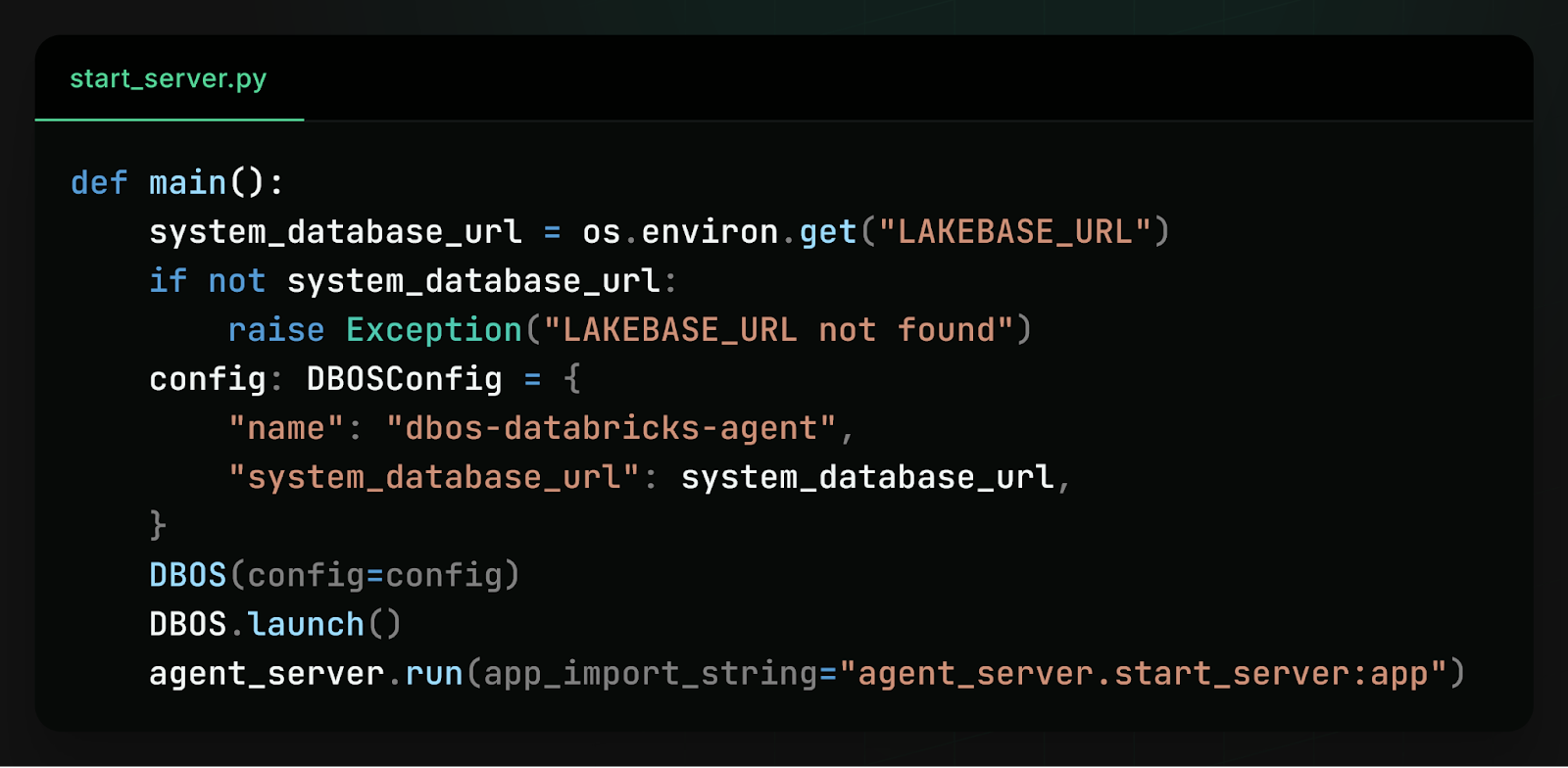

First, we launch DBOS in the agent’s main function, connecting it to a Lakebase database by following this tutorial:

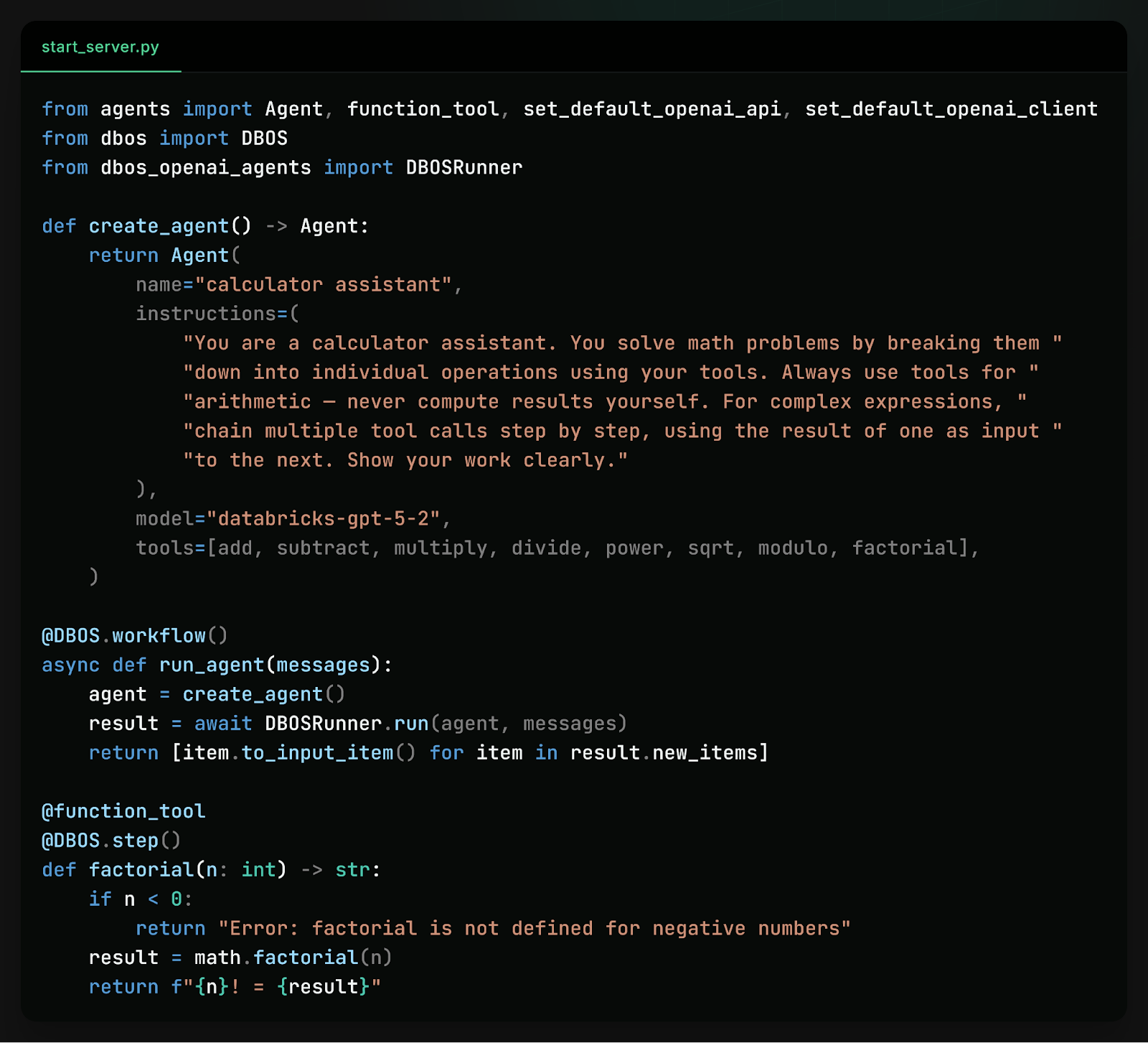
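Since Lakebase is a Postgres database, connecting to it usually comes down to supplying a Postgres connection URL. The sketch below shows one way to assemble such a URL from environment variables. The variable names, the `lakebase_url` helper, and the `sslmode=require` setting are assumptions for illustration only; the tutorial remains the reference for the exact credentials and settings.

```python
import os
from urllib.parse import quote


def lakebase_url(env=os.environ):
    """Assemble a Postgres connection URL from (hypothetical) environment variables."""
    # Percent-encode credentials so characters like '@' or '/' cannot break the URL.
    user = quote(env["LAKEBASE_USER"], safe="")
    password = quote(env["LAKEBASE_PASSWORD"], safe="")
    host = env["LAKEBASE_HOST"]
    port = env.get("LAKEBASE_PORT", "5432")
    database = env["LAKEBASE_DATABASE"]
    # Assumption: the database requires TLS, hence sslmode=require.
    return f"postgresql://{user}:{password}@{host}:{port}/{database}?sslmode=require"


# Usage with placeholder values; real values come from your workspace.
example = lakebase_url({
    "LAKEBASE_USER": "agent@example.com",
    "LAKEBASE_PASSWORD": "s3cret/token",
    "LAKEBASE_HOST": "db.example.com",
    "LAKEBASE_DATABASE": "agents",
})
```

Reading credentials from the environment keeps secrets out of the agent's source code, which matters once the agent is deployed as an application.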

Then, we use the native integration with the OpenAI Agents SDK to make our agent durable, annotating the agent’s entry point as a durable workflow and each of its tool calls as a step.

With those changes, the integration is done, and we can deploy our now-durable agent as a Databricks application.

Learn More

If you'd like to make AI agents durable on Databricks, here are some resources to help get you started:

- Quickstart: https://docs.dbos.dev/quickstart
- Docs: DBOS - Lakebase integration
- Docs: DBOS - Neon integration
- GitHub: https://github.com/dbos-inc
- Discord community: https://discord.gg/eMUHrvbu67